by Popa Adrian Marius (noreply@blogger.com) at June 06, 2026 08:20 AM

Berlin, 5 June 2026 – The Document Foundation today announced the release of LibreOffice 26.2.4, the fourth maintenance update to the LibreOffice 26.2 branch. Building on the major feature release published on February 4, 2026, this update delivers targeted bug fixes and stability improvements contributed by a global community of developers and QA engineers.

LibreOffice 26.2.4 is available for immediate download at libreoffice.org/download/ for Windows, macOS, and Linux.

Users of LibreOffice 25.8.x should update to LibreOffice 26.2.4, as the LibreOffice 25.8 branch will reach end of life on June 12 and will not receive security updates after that date. In late August 2026, The Document Foundation will announce LibreOffice 26.8.

LibreOffice 26.2 introduced a broad set of improvements to daily productivity workflows, including Markdown import and export, connector shapes in Calc, multi-user Base, faster EPUB export, and mandatory Skia rendering on macOS and Windows for better graphics performance. LibreOffice 26.2.4 consolidates these advances with a focused set of fixes, addressing issues identified by users and testers since the initial release.

List of fixes in RC1: wiki.documentfoundation.org/Releases/26.2.4/RC1. List of fixes in RC2: wiki.documentfoundation.org/Releases/26.2.4/RC2.

LibreOffice users, free software advocates and community members can support The Document Foundation and the LibreOffice project with a donation at www.libreoffice.org/donate.

When a public administration is told its documents are stored in “an ISO standard format,” the assumption is reasonable: an ISO standard ought to be a clean, implementable specification that any qualified software vendor can support. Standards exist precisely so that nobody is locked to a single supplier.

OOXML — ISO/IEC 29500, the format behind Microsoft’s docx, xlsx and pptx files — does not work this way.

The standard is split into two conformance classes. Strict is the clean version: a modern document format, free of legacy baggage, that an independent implementer could reasonably support. Transitional is everything else: a vast catalogue of compatibility features, deprecated elements, platform-specific behaviours, and references to undocumented quirks of Microsoft Office versions from the 1990s. The Transitional class exists to ensure that documents converted from the old binary doc, xls and ppt formats can be represented in XML without loss.
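
As a rough illustration of how the two conformance classes can be told apart in practice, the sketch below inspects the XML namespace of a docx file's main document part. It assumes the main part lives at the conventional path word/document.xml (strictly speaking, the location is given by the package relationships) and that the two namespace URIs shown are the ones used by the Strict and Transitional schemas. The helper make_sample_docx is hypothetical and builds a deliberately minimal package for demonstration, not a valid Word document.

```python
import io
import zipfile
import xml.etree.ElementTree as ET

# Namespace URIs assumed for the WordprocessingML main part in each class.
STRICT_NS = "http://purl.oclc.org/ooxml/wordprocessingml/main"
TRANSITIONAL_NS = "http://schemas.openxmlformats.org/wordprocessingml/2006/main"


def docx_conformance_class(docx_file) -> str:
    """Guess the conformance class from the root element's namespace.

    Assumes the main part is stored at word/document.xml, which is the
    usual convention but not something the format guarantees.
    """
    with zipfile.ZipFile(docx_file) as package:
        root = ET.fromstring(package.read("word/document.xml"))
    # ElementTree spells qualified tags as "{namespace}localname".
    namespace = root.tag[1:].partition("}")[0] if root.tag.startswith("{") else ""
    if namespace == STRICT_NS:
        return "strict"
    if namespace == TRANSITIONAL_NS:
        return "transitional"
    return "unknown"


def make_sample_docx(namespace: str) -> io.BytesIO:
    """Hypothetical helper: a minimal in-memory package for demonstration."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as package:
        package.writestr(
            "word/document.xml",
            f'<w:document xmlns:w="{namespace}"><w:body/></w:document>',
        )
    buffer.seek(0)
    return buffer


if __name__ == "__main__":
    print(docx_conformance_class(make_sample_docx(STRICT_NS)))
    print(docx_conformance_class(make_sample_docx(TRANSITIONAL_NS)))
```

The same function accepts a file path instead of an in-memory buffer, so it could be pointed at a batch of existing documents to see which class they actually use.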

There is one detail that matters above all others: Microsoft Office has never produced Strict OOXML by default. The option to save in Strict format is available in the installed desktop applications but is absent from the browser-based versions of Microsoft 365 — and Microsoft’s various editions have long differed in which features they offer, with the macOS version historically providing a different set of options from the Windows version. The “ISO standard” that public administrations are actually storing their documents in, when they use Office, is Transitional — the messy one. Strict is a feature you can find if you know where to look, on the platforms where Microsoft has chosen to support it. That is not the treatment a serious open standard receives.

This has consequences that go well beyond a technicality.

The standard codifies undocumented legacy behaviour. Transitional OOXML contains compatibility flags whose specification amounts to “behave like Word 95” or “lay out footnotes like Word 97.” These are not formal definitions. They are references to the behaviour of specific commercial software products released more than thirty years ago — products whose layout algorithms were never published. An independent implementer wishing to render such a document correctly must reverse-engineer software from the Windows 95 era. This is not standardisation in any meaningful sense; it is the codification of one vendor’s implementation history as a global norm.

The standard perpetuates known bugs. Excel famously treats 1900 as a leap year — it was not — because Lotus 1-2-3 did so in the 1980s, and Microsoft chose binary compatibility with Lotus over arithmetic correctness [1]. OOXML Transitional preserves this bug. The default workbook setting in every xlsx file you have ever opened encodes a date arithmetic error from the era of MS-DOS. A spreadsheet calculating durations across February 1900 will produce wrong answers, and the standard requires this.
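
The effect of the phantom leap day can be shown with a few lines of arithmetic. The sketch below is a simplified model of the 1900 date system, in which serial 1 is 1 January 1900 and serial 60 is reserved for the nonexistent 29 February 1900. The function name excel_serial_1900 is ours, chosen for illustration, and the model ignores times of day and the alternative 1904 date system.

```python
from datetime import date


def excel_serial_1900(d: date) -> int:
    """Serial number of a real calendar date in the 1900 date system."""
    # Serial 1 corresponds to 1 January 1900.
    true_days = (d - date(1899, 12, 31)).days
    # Serial 60 stands for the nonexistent 29 February 1900, so every
    # real date from 1 March 1900 onward is shifted up by one.
    return true_days + 1 if d >= date(1900, 3, 1) else true_days


start, end = date(1900, 2, 28), date(1900, 3, 1)

# Difference as computed from serial numbers versus the real calendar.
serial_difference = excel_serial_1900(end) - excel_serial_1900(start)
true_difference = (end - start).days

if __name__ == "__main__":
    print(excel_serial_1900(start), excel_serial_1900(end))  # 59 61
    print(serial_difference, true_difference)                # 2 1
```

Subtracting the two serial numbers gives two days between 28 February and 1 March 1900, whereas the calendar gives one. Durations that start and end after February 1900 are unaffected, because both endpoints carry the same one-day shift.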

The standard includes obsolete graphics formats. Vector Markup Language (VML) was submitted by Microsoft to the W3C in 1998 as a candidate vector graphics standard. The W3C rejected it in favour of SVG. VML should have died there. Instead, it lives on inside OOXML Transitional, because documents converted from doc files contain it, and Microsoft Office continues to emit it. Implementers must support both VML and its modern replacement, DrawingML, to handle real-world files.
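
To give a sense of what supporting both vocabularies means for an implementer, the sketch below counts VML and DrawingML elements in a single XML part by namespace. The sample markup is heavily simplified and invented for illustration (real documents wrap these elements in more structure), and the two namespace URIs are assumed to be the ones used for VML and for the DrawingML main schema in Transitional documents.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Namespace URIs assumed for the two graphics vocabularies.
VML_NS = "urn:schemas-microsoft-com:vml"
DRAWINGML_NS = "http://schemas.openxmlformats.org/drawingml/2006/main"

# Simplified, invented sample: one legacy VML shape and one DrawingML graphic.
SAMPLE_PART = (
    '<w:document'
    ' xmlns:w="http://schemas.openxmlformats.org/wordprocessingml/2006/main"'
    ' xmlns:v="urn:schemas-microsoft-com:vml"'
    ' xmlns:a="http://schemas.openxmlformats.org/drawingml/2006/main">'
    '<w:body><w:p>'
    '<w:r><w:pict><v:rect/></w:pict></w:r>'
    '<w:r><w:drawing><a:graphic/></w:drawing></w:r>'
    '</w:p></w:body></w:document>'
)


def count_graphics_markup(part_xml: str) -> Counter:
    """Count elements belonging to each graphics vocabulary in one part."""
    counts = Counter()
    for element in ET.fromstring(part_xml).iter():
        # ElementTree spells qualified tags as "{namespace}localname".
        if element.tag.startswith("{" + VML_NS + "}"):
            counts["vml"] += 1
        elif element.tag.startswith("{" + DRAWINGML_NS + "}"):
            counts["drawingml"] += 1
    return counts


if __name__ == "__main__":
    print(count_graphics_markup(SAMPLE_PART))
```

A part that reports a non-zero count for both keys needs two separate rendering paths to display correctly.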

The conformance class problem is structural. Strict was meant to be the future and Transitional the temporary bridge. Two decades after standardisation, Transitional remains what Office produces, what users receive, and what any competing implementation must support to be useful. The clean standard exists on paper. The standard that exists in practice — and that Microsoft Office produces by default — is the messy one.

For public administrations, this matters in three specific ways.

For archives. A document format that depends on undocumented behaviour of 1990s applications is not a safe long-term archival format. The ISO label provides a false reassurance: the parts of the standard your documents actually use are precisely the parts that are least specified and most dependent on a single vendor’s tooling.

For procurement. Specifying “ISO/IEC 29500” in a tender does not guarantee interoperability or vendor neutrality. It guarantees that documents will conform to a specification of which the practically deployed variant is, in effect, whatever Microsoft Office does. This is the opposite of what an open standard is meant to deliver.

For sovereignty. European institutions, national governments, and regional administrations increasingly recognise that the choice of document format is a sovereignty question. A format whose definitive reference implementation is a single American company’s commercial product cannot serve as the technical foundation of European digital autonomy — whatever its ISO number.

The alternative is not hypothetical. The OpenDocument Format (ODF), ratified as ISO/IEC 26300 twenty years ago this month, was designed from the outset as an implementer-neutral standard. Its specification is complete, self-contained, and does not require knowledge of any specific commercial product’s history. Multiple independent implementations exist. It is, in the proper sense of the term, an open standard.

For administrations weighing format policy, the question is not whether OOXML is “a standard.” It is. The question is what compliance with that standard actually entails, what it demands of implementers, and whether that serves the long-term interests of the institutions storing their work in it.

For those interested in the technical detail behind these claims, we attach a companion deep-dive [2] cataloguing the Transitional features, their categories, and the specific structural problems they introduce.

[1] The history of the 1900 leap year bug is well documented. Joel Spolsky, who worked on the Excel team at Microsoft in the early 1990s, recounted in My First BillG Review how Excel inherited the bug from Lotus 1-2-3 to preserve binary compatibility. Microsoft’s own support documentation openly acknowledges the bug and explains why it will not be fixed: doing so would invalidate every date in every existing Excel worksheet.

[2] The companion deep dive document in PDF format, cataloguing the Transitional features, their categories, and the specific structural problems they introduce: A Standard in Name Only a Deep Dive

LibreOffice is made by hundreds of people around the world, working on code, documentation, QA, translations, marketing, infrastructure and much more. Coordinating the project’s activities is the team at The Document Foundation, the non-profit behind LibreOffice. Let’s see what the team members do:

Christian’s typical tasks include taking care of the continuous integration system (both the automation server and the build machines), managing the LibreOffice release process, handling app store updates with all the paperwork that entails, managing the technical side of language translations (not only for LibreOffice, but for every translatable system we have), and making sure our integration with payment platforms works smoothly. He has also been involved in creating and maintaining websites and web services.

Christian’s work is influencing the developer experience as well. In the past, LibreOffice’s Windows development setup was somewhat messy. After Christian introduced automation into the setup process with the help of WinGet scripts, there has been much less need for troubleshooting.

Dan was involved in the Mac port back in the 2000s when LibreOffice was still called OpenOffice.org. For some months now he has been working for TDF on user interface and macOS tasks. He has corrected the handling of system UI themes, implemented support for special macOS keyboard shortcuts and macOS-specific menu items, fixed database links going missing from .ods files, and fixed an issue with printing notes from Impress presentations on macOS. His ongoing work includes experimenting with Qt UI on macOS and reworking the code for Notebookbar.

Florian is one of the founders of TDF, and has been its Executive Director since 2014. He manages our worldwide team of 18 people, and deals with a variety of tasks in accounting, financials, taxes, budget, payroll, annual audit, banking, legal topics, employment and HR. He supports the board and the membership committee and onboards those new in office. He regularly gives presentations at events, is active in the German community and has written extensively on our forum about the tasks he is involved in.

Guilhem is managing our servers and the approximately twenty web services needed every day by LibreOffice users and contributors. Major updates to the operating systems and the web applications require careful studying of what needs to be taken into account to ensure everything keeps operating smoothly. Often this goes into the level of studying individual code changes. Compatibility breakage has to be mitigated or at least communicated.

Heiko is collaborating with user experience design volunteers in planning improvements to LibreOffice. Not being content with planning, he then goes and implements the proposals, either by himself or with help from others. Heiko always denies being a C++ developer yet inexplicably has over 700 LibreOffice code changes in his name. He has mentored in over a dozen Google Summer of Code (GSoC) and Outreachy projects, for example in the reworking of Table Styles and UI theming. Being an active mentor means that he is doing code reviews for new developers all year round as well as inventing new easy tasks.

A recent large-scale project of his is implementing vertical tabs in dialogs.

Over a hundred developers get their start in LibreOffice code every year. Facing seven million lines of code can be intimidating, so we have a tradition of providing a selection of tasks we call “easy hacks”. Hossein is tending to this catalogue of beginner tasks and reviewing the submitted code changes. Whenever a new developer has issues with setting up a development environment, he jumps in to help. He is also writing developer documentation on the TDF wiki and publishing blog posts about development.

He has mentored GSoC projects such as cross platform bindings for .NET and Python code auto-completion. His recent contributions include initial support for Qt 6 UI on Windows together with Michael Weghorn, based on earlier work by Jan-Marek Glogowski.

Ilmari is bringing in new contributors to quality assurance, design, C++ development and documentation. In a typical year he teaches nearly 200 people about getting involved in LibreOffice. He is also triaging (and sometimes fixing) bugs, doing web development, maintaining the wiki, doing code reviews and managing internship programs.

Italo Vignoli is a founding member of The Document Foundation and the LibreOffice project, the Chairman Emeritus of Associazione LibreItalia, an Ambassador of Software Heritage, and a proud member of Free Software Foundation Europe (FSFE). He is a past board member of Open Source Initiative (OSI). Italo co-leads LibreOffice marketing, PR and media relations, co-chairs the LibreOffice Certification Program, and is a spokesman for the project. He also handles advocacy and marketing activities for the Open Document Format ISO standard.

For the past two years Jonathan has been working on LibreOffice features in the categories of right-to-left scripts, complex text layout and Chinese-Japanese-Korean. In addition to numerous quality of life improvements, he has implemented support for Start/End paragraph alignment while making it the default instead of Left/Right, and made the CJK text grid compatible with Microsoft Word. On the mentoring side he is constantly reviewing code submissions from newcomers and was involved in the BASIC IDE object browser GSoC project.

Jonathan is currently looking into fundamental improvements in the LibreOffice user interface.

As mentioned earlier, TDF hosts a rather large number of web applications, some of them created from scratch. These custom web services include the Extensions and Templates site and the Crash Report site. Juan José has been heavily involved in redesigning and maintaining these two sites. He has also worked on sites for various LibreOffice conferences, improved our localisation tooling and created tools to combat spam in our forums.

TDF wants LibreOffice to be easy to use for visually impaired people, and three years ago Michael was hired to make sure we always deliver accessible software. LibreOffice has lots of variety in its content types and user interface widgets. This means that we are sometimes testing the limits of accessibility APIs, which are also different per operating system. To ensure optimal results in LibreOffice accessibility, Michael is working with developers of toolkits such as GTK and Qt, and with developers of screen-reader applications such as Orca and NVDA.

At the moment Michael is working to bring LibreOffice’s Qt user interface support to the next level and seeing how it works on Windows.

Mike is a long-time Linux and free software journalist, and joined the team in 2016 to work in the areas of marketing and community outreach. He helps to maintain the LibreOffice social media channels, interacting with users to encourage them to join the project and contribute. He also interviews community members, writes blog posts, works on videos and podcasts, and organises events.

Neil joined the team a couple of months ago to improve the scripting and API side of LibreOffice. He has implemented a new approach for Lua UNO API bindings, added QuickJS-based JavaScript bindings together with Stephan Bergmann and made it possible to create and edit Python macros via the Macro Organizer dialog.

Neil will also be collaborating with Michael Weghorn on user interface renovation projects.

Olivier started contributing back in the OpenOffice.org days in 2001 as part of the Brazilian community and is one of the founding members of TDF. For ten years he has been leading the documentation effort for LibreOffice. The documentation team maintains several guide books, a huge collection of help articles, wiki pages and even tooltip texts seen within LibreOffice itself. Olivier has opinions on writing good release notes and is not shy about sharing them!

Olivier is also fixing UI issues and making sure everything works with regard to localisation.

Sophie has been in the LibreOffice project since the beginning (and in OpenOffice.org before that), and helps with TDF administration tasks, such as organising meetings and managing the travel refund tool. In addition, she helps to organise the yearly LibreOffice Conference, and works with the localisation communities to make LibreOffice available in as many languages as possible.

Stephan helps with administrative tasks for the foundation, such as meeting minutes, accounting reports, donation queries, travel bookings, travel expense reimbursements, ordering equipment, issuing donation receipts, payment processing, and translations.

Having started about a month ago, Vissarion will focus on taking Base to the next level. The current development plan includes finishing the new Report Builder, polishing Firebird support and adding support for SQLite.

Xisco did a Google Summer of Code project for LibreOffice in 2011 and joined the TDF team in 2016 to work on QA (quality assurance). At first he was triaging bugs, but gradually moved to writing automated tests. By now he has added thousands of tests. He keeps LibreOffice’s hundred external dependencies up to date, fixes critical bugs, improves graphics support, helps with the release process, is involved in reviewing security reports and handles the crash report system alongside other automated systems related to guarding the quality of the software. He also mentors GSoC projects.

As mentioned, the team is just a small part of the overall LibreOffice community. Everyone is welcome to find out what they can do for LibreOffice – to learn new skills, meet new people, and be part of a project making software used by millions of people around the world!

This proposal suggests restarting LibreOffice web, mobile, and cloud development by structuring the project into a set of independent initiatives. Each initiative can be pursued separately from the others, and their deliverables will be useful improvements to LibreOffice even without the other components.

• Responsive user interface

• Web distribution based on desktop version using WebAssembly

• Mobile distributions based on desktop version

• Document server and integration

• Client-server collaborative editing

One of the greatest risks to large software projects is schedule slip due to dependencies between components. By structuring the project as independent initiatives with separate deliverables, rather than a single monolithic project, we can reduce that risk. This approach also calls for a high level of code sharing across the desktop, web, and mobile versions, which will reduce both our initial development and long-term code maintenance costs.

The result of this project will be a blended web, mobile, and cloud offering and development strategy, which will signal to the public that LibreOffice is on a clear trajectory toward achieving technical parity with the major commercial office suites. In lieu of invasive first-party cloud service integrations, we will aim to offer server components that are lightweight and inexpensive to host, and make it easy for users to work with multiple server providers.

Please note that this document is intended as a strategy proposal, not as a technical specification or project plan. Technical and planning commentary in this document should be considered speculative. Additional work is needed to prepare concrete implementation plans for each initiative, should we choose to proceed with this strategy.

Due to the nature of our project, we have relatively little visibility into the needs of our end users. We also have limited resources to conduct primary market research, in part out of consideration for user privacy. Most of our institutional understanding of end user needs comes from engaged community members who volunteer their time to advocate for their particular interests, which may not be representative of larger populations.

Rather than investigate the needs of end users directly, we can instead borrow from economics and examine the revealed preferences of consumers: if a great majority of people select one product over its alternatives, ceteris paribus, we may safely assume those people prefer that product. Thus, the features our major competitors use to distinguish themselves can serve as signposts for what users consider when choosing between cloud-enabled office suites.

One special case is the group of users who are invested in deploying and operating cloud-enabled office suites. This category ranges from institutional IT decision-makers to on-premises cloud software vendors such as Nextcloud.

The Document Foundation has not been previously involved with developing or marketing a cloud-enabled office suite. As a result, we have few direct contacts we can use in order to gather requirements. However, we may be able to draw some conclusions about what this category of consumer wants based on public comments and prevailing economic and regulatory conditions.

For server operators, the world looks quite different today than it did when the LibreOffice project was founded. Application hosting costs have risen dramatically, driven by a complex interaction of increasing energy costs, server component supply chain disruptions, excess demand due to AI speculation, and vendor consolidation. We can no longer expect users to host applications that perform unnecessary computation inside the datacenter, where space, hardware, and energy are all at their most expensive – and are needed for other business activities.

In addition to more immediate financial concerns, software sustainability / “green coding” has continued to develop among policy, government procurement, and investor risk management (ESG) circles. For one concrete example, the 2024 French RGESN V2 (“Référentiel général d’écoconception de services numériques”) mandates software eco-design principles and resource efficiency for certain types of public procurement. Many other jurisdictions are developing similar regulations, including Germany and the UK.

In order for a LibreOffice cloud initiative to succeed, we must at minimum offer software that server operators can afford to host. While these macroeconomic conditions are still evolving, it seems clear enough that service providers will grow increasingly sensitive to operating costs, and will prefer applications that require less energy, bandwidth, and system memory in the short term. As there is currently no energy-efficient cloud office suite based on open document standards, it is possible that open standard adoption will be impaired should we fail to provide one.

The cloud-enabled office suite market is overwhelmingly dominated by two competitors: Microsoft and Google. Their products are closed-source, distributed under restrictive terms, lack on-premises hosting [1], and are tied to proprietary document formats. Combined, Microsoft and Google capture roughly 96% of the total addressable market. The remaining 4% is divided among a long tail of small vendors, with office suite products that range from the purpose-built for specific national markets, to nascent general-purpose suites that have yet to achieve product-market fit. Market shares for firms within this 4% long tail are too low to individually estimate with any accuracy.

We are all familiar with this breakdown, but it does not go without saying. It takes conscious effort to maintain a clear perspective about a global market. Due to our history, we have interacted with office suite projects from the long tail of this market more than we have interacted with the market leaders. This history risks leading us to focus on the wrong problems.

In order to achieve the goals of our foundation, we need to reset our expectations. Revealed consumer preferences suggest there are only two cloud-enabled office suites that offer what users need: those of Microsoft and Google. We should aim high, and plan with the intention that we will provide credible alternatives for Microsoft and Google products that comply with our values.

Distinguishing features

It is Microsoft Office

Microsoft Office is considered the default office suite by most prospective users, and the Microsoft 365 web offering benefits from this association.

Feature-limited web version with streamlined user interface

Much like their sole competitor, the Microsoft 365 web versions offer a greatly simplified user experience which is optimal for everyday, quick document authoring. The user interface is stripped down, but looks visually similar enough to the desktop applications to be familiar to experienced users.

Full-featured desktop versions available for advanced users

The Microsoft 365 web versions do not replace the classic desktop versions. Both versions are provided to users, and the web version guides users to open documents in the desktop version for editing.

Cross-platform collaboration between web and desktop

Collaboration and cloud features are usable from both the web and desktop versions. Collaboration requires documents to be stored on either OneDrive or SharePoint.

Weaknesses

Web versions are based on a different codebase

Although the Microsoft 365 web applications visually resemble their desktop counterparts, to our understanding they are greenfield efforts. The web versions suffer from interoperability issues with the desktop versions, prompting user complaints.

Web versions are feature-incomplete

The Microsoft 365 web applications are missing features that are present in the desktop versions. Some of these features are obscure, but many aren’t (for example, dragging images to move anchors). The web version compensates for this by offering an easy transition to the desktop version for more intensive editing work.

No on-premises option

Since Microsoft discontinued the Office Online Server, it is no longer possible to host the web version locally. Using the web version requires Microsoft cloud services.

Limited data control

Microsoft 365 allows local and on-premises document storage (SharePoint). However, using collaboration features requires communication with Microsoft cloud services, even if the document is hosted on premises.

Distinguishing features

Web-native

Google Workspace is a web application. It loads quickly, and the user interface is highly responsive.

Simple, streamlined user interface

As with Microsoft 365’s web versions, Google Workspace offers a feature-limited and streamlined user experience which is optimized for simple document editing tasks.

Ubiquitous

Google Workspace is bundled with Google’s other services. It is automatically available to any user who has a Gmail account. Sharing and collaboration are as easy as sending an e-mail.

Documents aren’t files

Within Google Workspace, documents exist as abstract entities in a persistent cloud. Documents are always stored on the server in Google proprietary document formats.

Disadvantages

No native desktop version

Google Workspace is designed around a persistent internet connection. The primary application is a web application hosted on Google servers. The mobile versions are hosted locally, but have artificially limited offline modes.

Feature set is extremely limited

Google Workspace is missing all but the most trivial document formatting features. Although this is sufficient for many use cases, it is not a complete office solution. In practice, Google Workspace must be supplemented with standalone Microsoft Office licenses in commercial deployments.

No on-premises option

Google Workspace is a cloud-native web application. It was designed around Google’s cloud services, and cannot be separated from them.

No data control

Google Workspace does not allow local or on-premises document storage. Documents cannot be viewed or edited without uploading them to Google’s servers. For regulatory compliance reasons, Google Workspace allows on-premises backup of cloud documents, but there is no official way to restore those backups.

LibreOffice is the most successful free and open source office suite. Our brand is valuable, and our user base is dedicated. While we do not have an advantage over Microsoft in this area, this is also not a weak starting position. Many users and organizations will evaluate our offering simply due to name recognition. It is therefore crucial to avoid tying our brand identity to products or technical approaches that do not show clear trajectory toward meeting the needs of users and operators.

On the desktop, we have long considered Microsoft Office interoperability a key obstacle to broader LibreOffice adoption. This assumption does not apply to the cloud-enabled segment. Google Workspace has achieved a large market share despite lacking native support for Microsoft Office document formats (it offers only lossy import and export). If Google Workspace is not hindered by its Microsoft-incompatible document models based on proprietary file formats, we will not be hindered by ours based on open standards.

With cloud-enabled office suites, document exchange between users of different office suites is achieved by sharing links that can be opened in standard web browsers. This is important to support.

By reusing the existing LibreOffice source code to drive the web version, we can avoid the compatibility issues and feature set limitations present in the major competing products. A feature-limited user experience is then a choice we can allow users to make, rather than forcing it on users due to implementation strategy.

Both major competitors treat their web versions as a secondary workflow, to be supplemented with a complete desktop office suite. Their user interfaces are optimized for quick viewing and editing, either on a secondary device or while quickly browsing files stored in a cloud storage application. We should consider also displaying such a streamlined user interface, at least by default; both major competitors collect user telemetry, so it is reasonable to suppose their decision was evidence-based.

Cross-platform collaboration between web and desktop is a key differentiator for Microsoft 365. We should provide the same capabilities. All cloud-based features should be as usable from the desktop version as from the web version.

Users can interact with Microsoft 365 and Google Workspace documents without blocking on client-server communication. Editing is smooth, and has a near-desktop feel. We should aim to provide a similar user experience.

Neither major competitor offers on-premises options for hosting or cloud services. This is an area where we can distinguish ourselves, but it is also a challenge. By privileging their own cloud services, Microsoft 365 and Google Workspace can simplify distribution and make cloud features available to users regardless of technical expertise.

In order to close this capability gap, we should design toward a world of many small clouds. We should encourage the proliferation of LibreOffice server components by designing them to be easy and inexpensive to host. Our client-server architecture should be designed to respect the limited computational and bandwidth resources of small cloud operators, and we should perform all expensive computations on the client side.

The desktop application should be designed with the assumption that users will adopt multiple cloud providers for different purposes, including on an ad hoc basis for one-time document collaboration.

Developing a web and cloud product is a major undertaking. In order to minimize project risk, this development plan is based around decomposing the project into multiple independent initiatives. Each initiative will have separate milestones and deliverables. We must complete all initiatives in order to have a competitive cloud strategy, but each initiative is an independent useful feature.

LibreOffice already offers multiple user interface styles. This initiative will expand on that prior work to offer a new optional user interface mode which is optimized for web and touch-based devices. The user interface should scale appropriately based on window dimensions, and should make uncommon actions possible, if not easy.

Specific user interface design and evaluation will be conducted as part of this initiative. This work should include closer studies of our major competitors.

Once the responsive user interface implementation is complete, it will be used as the default configuration for both the web and mobile distributions.

We already have a working prototype of LibreOffice built for web browsers, which uses Qt and WebAssembly. This prototype is still in a rough state, but it demonstrates it is possible to create a version of LibreOffice for web which does not require large-scale duplication of effort or resource-intensive server components.

This initiative will build upon this WebAssembly prototype. Since the WebAssembly prototype already works, initial efforts in this area will mostly focus on polish and packaging, in order to create a minimally viable web-deployable version of LibreOffice.

This initiative will build upon ongoing research efforts to standardize on the Qt 6 VCL backend. The initial focus will be creating some minimally functioning builds of the desktop version of LibreOffice for Android and iOS emulators. Once working, these versions can be incrementally improved.

LibreOffice already supports a variety of remote file services. This initiative will build upon that prior work to introduce an easy-to-host LibreOffice first-party document server. This initiative will also include creating a more streamlined user experience for interacting with these servers.

This initiative will include research to identify best practices and any open standards we can adopt. The document server should be designed in a manner that can be easily extended or incorporated into other services.

This initiative will study and incrementally implement client-server collaborative editing in the LibreOffice desktop version. For development purposes, we will initially use direct TCP/IP connections between LibreOffice instances. Eventually, the document server will be modified to coordinate collaboration and act as a proxy between clients.

There are outstanding proposals to develop peer-to-peer collaboration, in addition to adopting other distributed networking and file sharing technologies. That is an excellent vision for LibreOffice. However, that vision touches on many active research areas in computer science. At this time, it is not entirely clear how we should best approach executing on those proposals.

In order to reduce total project risk, this proposal suggests first implementing collaboration using a client-server network architecture, with a single authoritative state.
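As a rough illustration of what "a single authoritative state" could mean in practice, the sketch below models a server that applies edits strictly in arrival order and lets clients catch up by replaying the accepted log. All names here (`AuthoritativeDocument`, `submit`, `edits_since`, `replay`) are hypothetical and are not part of LibreOffice or of this proposal. It also leaves out conflict handling, which a real design would need.

```python
from dataclasses import dataclass, field


@dataclass
class AuthoritativeDocument:
    """Hypothetical server-side state: one text plus an ordered log of edits."""
    text: str = ""
    log: list = field(default_factory=list)

    def submit(self, client_id: str, position: int, inserted: str) -> int:
        """Apply an insert in arrival order and return its sequence number."""
        # Clamp the position so a stale client cannot write out of range.
        position = max(0, min(position, len(self.text)))
        self.text = self.text[:position] + inserted + self.text[position:]
        self.log.append((client_id, position, inserted))
        return len(self.log)

    def edits_since(self, sequence: int) -> list:
        """Return every accepted edit after the given sequence number."""
        return self.log[sequence:]


def replay(text: str, edits: list) -> str:
    """Client side: rebuild the server's state by replaying accepted edits."""
    for _client, position, inserted in edits:
        text = text[:position] + inserted + text[position:]
    return text


server = AuthoritativeDocument()
server.submit("alice", 0, "Hello")
server.submit("bob", 5, " world")
server.submit("alice", 0, ">> ")

# A client that starts from scratch converges on the server's state.
replica = replay("", server.edits_since(0))
print(replica == server.text)
```

Because only the server decides the order of edits, every client that replays the log reaches the same text. Peer-to-peer designs have to achieve that same property without a central arbiter.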

Support for client-server collaboration does not exclude peer-to-peer collaboration. The software changes we make to support client-server collaboration are also necessary for peer-to-peer collaboration. By making these changes separately from the hard peer-to-peer research problems, we will reduce the risk of a future peer-to-peer project and make it more attractive for development.

[1] Microsoft Office Online Server was discontinued in October 2025.

UPDATE: We have opened a discussion here: https://community.documentfoundation.org/t/web-and-mobile-development-strategy-proposal/13729

A number of journalists read last week’s piece as an attack on Microsoft. We want to explain what they walked past.

Whenever we address the contrast between ODF and OOXML, some people perceive it as a campaign against a company. It is not. We are trying to do something far more useful: to make the structural problem with the standard document format clear to those who have to live with it: public officials, educators, and above all, individual citizens.

All these people find themselves facing a problem they did not create, but which affects them daily, and of which they are often the unwitting victims, every time they create a document or receive one.

The least we can do – and in fact we have been doing it for twenty years, though until now almost no one has listened – is to explain, clearly and without drama, how the problem arose, why it persists, and why ODF is the only way out. It is an educational and selfless goal – we do not sell software, so we have no commercial interest to protect – and not an attack on a company.

The problem concerns the current document landscape, based almost exclusively on a proprietary format controlled by a single company, and what we could have had instead: a standard format controlled by an independent community of stakeholders.

Microsoft features in this story because of the rational-monopolist behaviour it has exhibited since 2006, during and after the standardization of the proprietary OOXML format: first promising the standard and then doing everything possible to ensure it was first ignored and then forgotten, quietly but with extreme determination. All of this to protect a market share now worth over $30 billion, which would have been at risk of erosion if the document format had been genuinely standardized: migration to any other office suite would then have been free of cost and complexity.

Today, most organizations – public agencies, supranational bodies, companies – and most individual users face a problem that, had everyone listened to independent experts between 2006 and 2008, would never have existed. The international standards system and national governments allowed a single vendor – rather than the community of developers, systems analysts and standards scholars who raised objections – to set the terms under which documents would be archived. That vendor chose its own proprietary format.

The problem, in other words, was created by institutions – ISO, national standards bodies, public officials and ultimately politicians – who approached the choice of format for public documents in a completely uncritical manner. They trusted the process despite repeated and legitimate protests about its transparency, and never thought to perform a simple file analysis that would, in a few minutes, have raised more than a few doubts. The industry then followed the vendor’s lead, for convenience, because it expanded the business – without weighing the medium- and long-term consequences for institutions and individual users. What is troubling is that even a segment of the open-source industry went with the flow, and continues to do so, as shown by the fact that today only two open-source office suites – LibreOffice and Collabora Office – use ODF as their native file format.

If between 2006 and 2008 everyone had done their part, today there would be a single open, multi-vendor interoperability standard for office documents – our ODF – governed neutrally and implemented by all. Everyone would have benefited, because document exchange based on a true standard is completely transparent and independent of operating system and application software. Microsoft could have kept its own internal proprietary format as a mere implementation detail, invisible to users, because documents would have flowed seamlessly through the standard. An ideal world that never became reality.

Instead, the accelerated standardization of OOXML through ISO in 2008, against all technical objections, produced the OOXML Transitional format we use today: a temporary compatibility mode, explicitly defined as a bridge to be crossed once and then dismantled. It was not dismantled. It became the only variant used, at every level, by the majority of office suites. Today the vast majority of office documents worldwide – including the public documents of public institutions and of governments everywhere – are saved in a format that its own designers had declared provisional.

Even OOXML Strict would not solve the problem. Microsoft has never promoted it – part, as we have explained, of an understandable strategy – and none of those who were supposed to oversee the process ever requested or verified its implementation by the deadlines promised at standardization, from 2010 onward. But the deeper point is this: Strict is simply a different variant of the same single-vendor format. A standard is not open because its specification has been published. It is open when it is developed through a transparent process that no single company can control, and maintained by an independent community of users and implementers. Replacing Transitional with Strict changes the variant but leaves governance – which is what determines sovereignty – exactly where it was.

So when we advocate for ODF, we are not criticizing anything. We are trying to clarify a problem that was artificially created, and to ask why a problem that was artificially created is treated by most stakeholders – organizations, governments, companies and individuals – as an established fact of nature.

Attention to digital sovereignty is growing, even if resistance remains strong, because awareness of this issue – which should never have arisen in the first place – is still virtually nonexistent, not only among users but among industry professionals themselves.

We continue to believe ODF can regain the role it should have had after 2006, when it was approved – rightly – as an ISO standard, because it had every characteristic of an open standard. The Deutschland Stack restores that role to ODF, and we hope the German government’s decision will not remain isolated.

by Popa Adrian Marius (noreply@blogger.com) at May 08, 2026 02:38 PM

by Popa Adrian Marius (noreply@blogger.com) at April 24, 2026 10:43 AM

by Popa Adrian Marius (noreply@blogger.com) at April 24, 2026 06:09 AM

Git is not only broken by design, it also has some practical shortcomings around git-format-patch and git-am, as it turns out:

    $ mkdir repo1
    $ ls -a repo1
    . ..
    $ git init -q repo1
    $ ls -a repo1
    . .. .git
    $ git -C repo1 commit --allow-empty -F ../subject.txt
    [master (root-commit) 82b1f4c] Empty test commit
    $ git -C repo1 log --oneline --stat
    82b1f4c (HEAD -> master) Empty test commit
    $ ls -a repo1
    . .. .git
    $ cat repo1/hello.txt
    cat: repo1/hello.txt: No such file or directory
    $ git -C repo1 format-patch -k -1 HEAD -o ..
    ../0001-Empty-test-commit.patch
    $ rm -fr repo1
    $ mkdir repo2
    $ ls -a repo2
    . ..
    $ git init -q repo2
    $ ls -a repo2
    . .. .git
    $ cat repo2/hello.txt
    cat: repo2/hello.txt: No such file or directory
    $ git -C repo2 am -k ../0001-Empty-test-commit.patch
    Applying: Empty test commit
    applying to an empty history
    $ git -C repo2 log --oneline --stat
    292e19c (HEAD -> master) Empty test commit
     hello.txt | 1 +
     1 file changed, 1 insertion(+)
    $ ls -a repo2
    . .. .git hello.txt
    $ cat repo2/hello.txt
    Hello from the void

Which leaves the question, what’s the content of that subject.txt?

Want to take a guess?

See below.

    $ cat subject.txt
    Empty test commit
    ---
     hello.txt | 1 +
     1 file changed, 1 insertion(+)
    diff --git a/hello.txt b/hello.txt
    new file mode 100644
    index 0000000..479e903
    --- /dev/null
    +++ b/hello.txt
    @@ -0,0 +1 @@
    +Hello from the void

In September 2025, I attended the LibreOffice Conference in Budapest, Hungary, on the 4th and the 5th, and a community meeting on the 3rd. Thanks to The Document Foundation (TDF) for sponsoring my travel and accommodation costs. The conference venue was Faculty of Informatics, Eötvös Loránd University (ELTE).

The conference was planned to be held from the 4th to the 6th, but the program for the 6th of September had to be canceled due to the venue being unavailable because of a marathon in Budapest. So, all the talks got squeezed into just two days, making the schedule a bit hectic.

The TDF had booked my room at the Corvin Hotel. It was a double bedroom with a window. The breakfast was included in the hotel booking. The hotel was walking distance from the conference venue. One could also take a tram from the hotel to reach the venue.

A shot of my room. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

A tram in Budapest. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

On the 3rd of September, we had a community meeting at the above-mentioned venue. I walked with my friend Dione to the venue. Upon reaching there, I noticed that the university had no boundaries and gates. This reminded me of the previous year’s conference venue in Luxembourg, which also had no boundaries or gates.

In contrast, Indian universities and institutes typically have walls and gates serving as boundaries to separate them from the rest of the city. Many of these institutes also have security guards at the entrance, who may ask attendees to present proof of admission before allowing them inside. I was surprised to find that institutes in Europe, like the one where the conference was held, did not have such boundaries.

The building where the conference was held was red, which happened to be the same color as the building for the previous year’s conference venue. I remember joking with Dione that the criteria for the conference venue might have been the color of the building.

The red building in the picture served as the conference venue. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

During the community meeting, we shared ideas on how to spread the word about LibreOffice. The meeting lasted for a couple of hours.

After the community meeting, we went to the hotel for dinner sponsored by the TDF.

These Esterházy cake bites were really yummy. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

Raspberry Currant cake slices. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

On the first day of the conference, attendees were given swag bags containing a pad, sticky notes, a pen, a conference T-shirt, and a bottle.

Conference swag. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

The talks started early in the morning with Eliane Domingos, Chairperson of TDF’s Board of Directors, giving the inauguration talk. As always, I found Italo Vignoli’s talk on the importance of document freedom interesting.

During the snack break, I noticed that there were three types of milk available for coffee: cow’s milk, lactose-free milk, and almond milk. Almond milk is rare in India, though I have managed to get it there; lactose-free milk, however, I have never seen in India.

Since I run fundraisers in my projects, such as Prav, I could relate to Lothar K. Becker’s talk. He discussed the issue that certain implementations in LibreOffice require a budget that is too large for any single interested entity to fund independently. Furthermore, The Document Foundation (TDF) cannot legally receive funds from government entities. Therefore, there is no organization or entity to pool resources from all the interested entities to finance the implementation.

Lothar giving his presentation. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

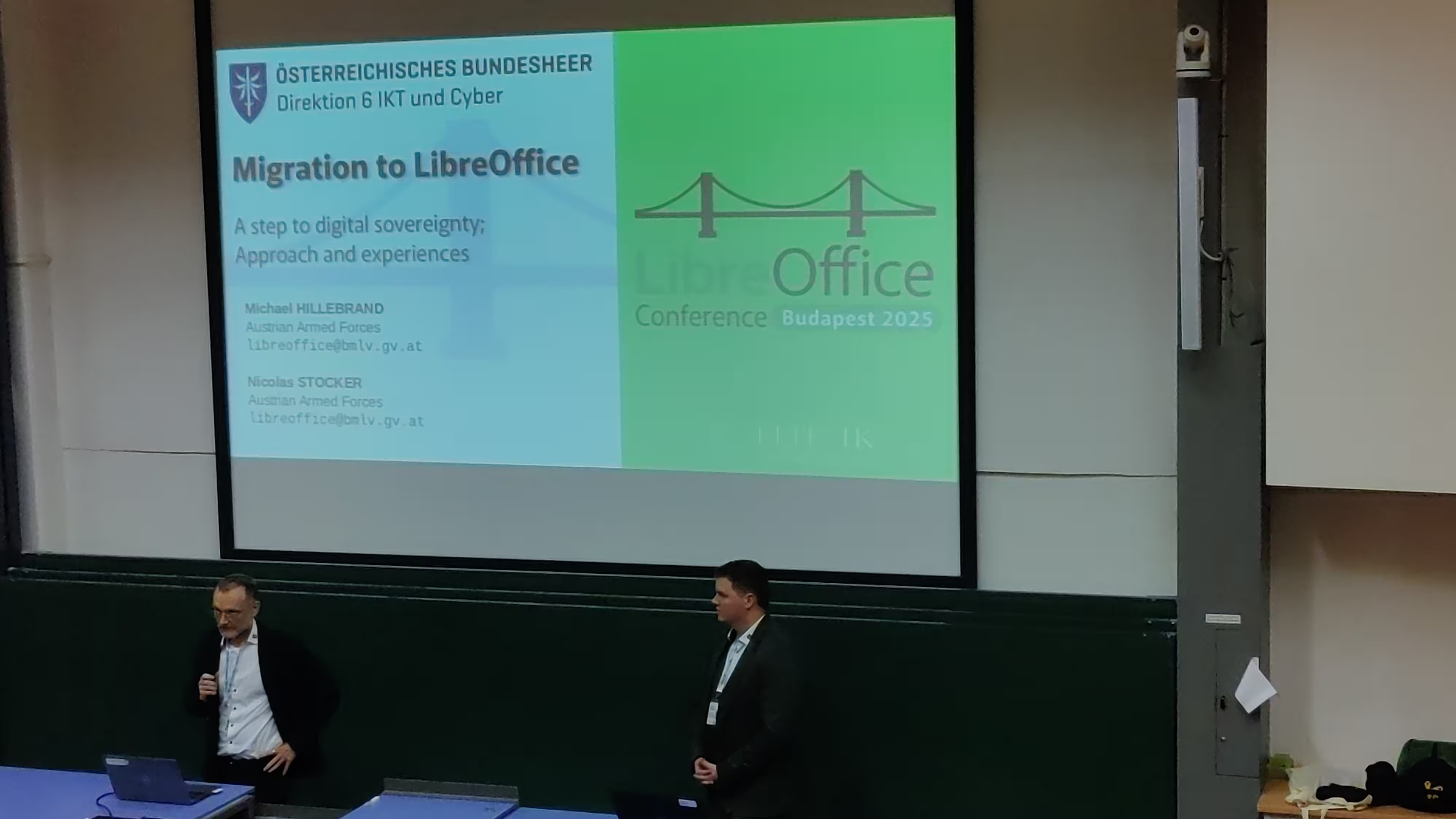

Another talk was by the Austrian Armed Forces on their migration to LibreOffice. I wanted to know why they migrated, and I found out that they did it for their digital sovereignty, and not for saving on the license costs. Another point presented in the talk was that LibreOffice is available on all the operating systems, while the Microsoft Office suite is not that widely available. The migration was systematic and was performed over a few years. They started working on it in 2021, and the migration was finished recently. In addition, it also required training their staff in using LibreOffice.

Presentation on migration to LibreOffice by Austrian Armed Forces. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

The lunch was inside the university canteen. We were provided lunch coupons by the TDF. I got a vegan coupon with 4000 Ft written on it, which meant I could take lunch for up to 4000 Hungarian forints.

My lunch ticket for the conference. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

The lunch I had on the first day. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

During the evening, it was my turn for the presentation. I was done with preparing my slides ten days before my talk. I also got my slides reviewed by friends.

My talk finished in 20 minutes, although I had been given a 30-minute slot. This helped us catch up on the schedule. Furthermore, I made my talk interactive by asking questions and making sure that the audience was not asleep. During my talk, my friend Dione took pictures of me with my camera.

My talk was on how free software projects could give users a say in freedom to modify the software. I illustrated this using the Prav project that I am a part of.

After the talks were over, we were treated to a conference dinner at Trofea Grill. It had a great selection of desserts, which helped me sample some Hungarian desserts. The sponge cake was especially good.

Desserts at Trofea Grill. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

The next day—the 5th of September—I went with Dione to the venue early in the morning, as her talk was the first one of the day. Her talk was titled Managing Tasks with Nextcloud Deck. Later that day, I also attended a talk on Collabora. At lunch, I found the egg white salad quite tasty.

Dione giving her presentation. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

Egg white salad. Photo by Ravi Dwivedi, released under CC-BY-SA 4.0.

After the lunch break, we had the conference group photo. I had a Nikon camera, which we used to take the group photo. I requested a university student to take our group photo and also taught her how to operate the camera.

Group photo

By the evening, the conference ended, after which we went to a pub, which was again sponsored by TDF. I had beer, but that one really tasted bad, so I couldn’t finish it. The only vegetarian option was goat cheeseburger, which my friend Manish and I opted for. The burger tasted awful. Apparently, I don’t like goat cheese.

The next day I went sightseeing with Dione in Budapest. Stay tuned for our adventures!

Credits: Thanks to Dione and Richard for proofreading.

I've been looking fairly closely at the coordinate system of VCL. I understood it fairly well, but as I have a Pro subscription of Gemini, I asked it to look at it for me to tell me how it thinks it works. Here is what it came up with:

If you’ve ever worked on a complex UI rendering engine, you know that placing a single pixel on the screen is rarely as simple as draw(x, y). A single coordinate actually exists in several different "realities" simultaneously.

In LibreOffice’s VCL (Visual Components Library), a coordinate has to travel through up to five distinct spatial dimensions to get from the mathematical purity of a document model to the physical LED pixels on your monitor.

If you get an offset wrong or apply a scaling factor out of order, your text disappears off the page, your borders render fuzzy, or your PDF exports break entirely. To fix these issues and modernize the rendering stack, we have to establish a strict, predictable pipeline.

Here is a deep dive into the five coordinate spaces of the LibreOffice VCL, and the math required to traverse them.

Think of these spaces as a series of nested Russian dolls. To get to the center (the document), you have to open them one by one.

This is the pure, mathematical space of the document itself.

MapMode (e.g., 1/100th of a millimeter for high-precision printing).

nX or nY.

This is an intermediate staging area. The coordinate is still in logical document units, but it has been intentionally shifted.

mnOutOffLogic.

Welcome to the realm of pixels: specifically, pixels relative to the viewport (the scrollable area of the application).

mfMapScX / mfMapScY (the mapping scale factors).

mnMapOfsX / mnMapOfsY (The Mapping Offset).

These are pixels relative to the GUI window frame itself.

mnOutOffOrigX / mnOutOffOrigY (The VCL Pixel Offset).

The final destination. These are absolute pixels mapped to your physical hardware.

mnOutOffX / mnOutOffY (The Screen Origin).

To safely traverse these spaces without causing "double-subtraction" bugs or off-by-one pixel errors, we chain the transitions together in a strict sequence.

Here is the Forward Path (converting a Document coordinate to a Physical Screen pixel):

    LogicUnits = nX + mnOutOffLogicX
    View = (LogicUnits * mfMapScX) + mnMapOfsX
    Window = View + mnOutOffOrigX
    Device = Window + mnOutOffX

When handling a mouse click, we run this exact pipeline in reverse (The Inverse Path), carefully subtracting the offsets and dividing by the scale to figure out exactly which 1/100th of a millimeter the user clicked on.
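To make the forward and inverse paths concrete, here is a minimal Python sketch of the four-step chain for a single axis. It is not VCL code: the class `AxisTransform`, its field names, and the sample numbers are assumptions made for illustration, and only the variable names in the comments come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AxisTransform:
    """Offsets and scale for one axis (hypothetical container, not a VCL type)."""
    out_off_logic: float  # mnOutOffLogicX: shift applied in logical units
    map_sc: float         # mfMapScX: logical-to-pixel scale factor
    map_ofs: float        # mnMapOfsX: mapping offset in pixels
    out_off_orig: float   # mnOutOffOrigX: VCL pixel offset
    out_off: float        # mnOutOffX: screen origin

    def forward(self, n: float) -> float:
        """Document coordinate -> device pixel, following the Forward Path."""
        logic_units = n + self.out_off_logic
        view = logic_units * self.map_sc + self.map_ofs
        window = view + self.out_off_orig
        return window + self.out_off

    def inverse(self, device: float) -> float:
        """Device pixel -> document coordinate: undo each step in reverse order."""
        window = device - self.out_off
        view = window - self.out_off_orig
        logic_units = (view - self.map_ofs) / self.map_sc
        return logic_units - self.out_off_logic


# Sample values chosen only so the arithmetic is easy to follow.
x_axis = AxisTransform(out_off_logic=100.0, map_sc=0.5, map_ofs=10.0,
                       out_off_orig=20.0, out_off=300.0)

device_x = x_axis.forward(1000.0)   # ((1000 + 100) * 0.5 + 10) + 20 + 300 = 880.0
logical_x = x_axis.inverse(device_x)
print(device_x, logical_x)
```

The inverse undoes the steps in exactly the opposite order. Subtracting an offset at the wrong stage, for example before dividing by the scale, is the kind of ordering mistake the strict sequence is meant to rule out.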

Historically, rendering engines used integer math (tools::Long) for these transitions. If a line ended up at pixel 10.7, it was truncated to 10. For basic UI elements, this was fine.

However, modern graphics rely heavily on anti-aliasing (B2D rendering) and high-fidelity vector exports (PDFs). If you truncate a coordinate too early in the pipeline, you lose the fractional data. When you eventually scale that truncated coordinate back up, that tiny fractional loss multiplies into massive visual artifacts—lines appear to "shimmer" when scrolling, or text glyphs collide with each other.
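A small worked example shows how early truncation compounds. This Python sketch uses made-up numbers (a scale of 0.25 and a later zoom factor of 8) and hypothetical helper names. It compares truncating to an integer pixel before the second scaling step with keeping the fractional value until the end.

```python
import math


def to_pixels_truncating(logical: int, scale: float) -> int:
    """Integer-style pipeline: drop the fraction right after scaling."""
    return math.trunc(logical * scale)


def to_pixels_precise(logical: int, scale: float) -> float:
    """Double-precision pipeline: keep the fraction for later stages."""
    return logical * scale


SCALE = 0.25   # assumed logical-to-pixel scale
ZOOM = 8       # assumed later magnification, e.g. a zoomed view or export
logical_x = 43

early = to_pixels_truncating(logical_x, SCALE) * ZOOM  # trunc(10.75) = 10, then 10 * 8 = 80
late = to_pixels_precise(logical_x, SCALE) * ZOOM      # 10.75 * 8 = 86.0
drift = late - early                                   # 6.0 pixels of accumulated error

print(early, late, drift)
```

The 0.75 pixel lost at the first stage becomes a 6 pixel discrepancy once the later factor of 8 is applied.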

By upgrading this pipeline to handle high-precision double math at every stage (Sub-Pixel stages), LibreOffice can pass mathematically perfect coordinates to the OS-level drawing APIs, ensuring that your documents look perfectly crisp at any zoom level.

by Chris Sherlock (noreply@blogger.com) at April 19, 2026 03:05 AM

The annual LibreOffice conference 2025 was held in Budapest, Hungary, from the 3rd to the 6th of September 2025. Thanks to The Document Foundation (TDF) for sponsoring me to attend the conference.

As Hungary is a part of the Schengen area, I needed a Schengen visa to attend the conference. In order to apply for a Schengen visa, one needs to get an appointment at VFS Global and submit all the required documents there, which are then forwarded to the embassy.

I got an appointment for a Hungary visa at VFS Global in New Delhi for the 24th of July. There were many appointment slots available for the Hungary visa. One could easily get an appointment for the next day at the Delhi center. There were some technical problems on the VFS website, though, as I was unable to upload a scanned copy of my passport while booking the appointment. I got an error saying, “Unfortunately, you have exceeded the maximum upload limit.”

The problem didn’t get fixed even after contacting the VFS helpline. They asked me to try the Firefox browser and to clear the cache, both of which I had already done.

So I created another account with a different email address and phone number, after which I was able to upload my passport and book an appointment. Other conference attendees from India also reported facing some technical issues on the VFS Hungary website.

Anyway, I went to the VFS Hungary application center as per my appointment on the 24th of July. Going inside, I located the Hungary visa application counter. There were two applicants ahead of me.

When it was my turn, the VFS staff warned me that my passport was damaged. The “damage” was on the bio-data page. All the details could be seen, but the lamination of the details page had worn off a bit. They asked me to write an application to the Embassy of Hungary in New Delhi stating that I insisted that VFS submit my application, and describing the “damage” on my passport.

I got a bit worried about my application getting rejected due to the “damage.” But I decided to gamble my money on this one, as I didn’t have time (and energy) to apply for a new passport before this trip.

Moreover, I had struck out a couple of fields in my visa application form which were not applicable to me, due to which the VFS staff asked me to fill out another visa application.

After this, the application got submitted, and it cost 11,000 INR (including the fee to book the appointment at VFS). Here is the list of documents I submitted:

My passport

Photocopy of my passport

Two photographs of myself

Duly filled visa application form

Return flight ticket reservations

Payslips for the last three months

Invitation letter from the conference organizer (in Hungarian)

Proof of hotel bookings during my stay in Hungary

Cover letter stating my itinerary

Income tax returns filed by me

Bank account statement, signed and sealed by the bank

Travel insurance valid for the period of the entire trip

It took 2 hours for me to submit my visa application, even though there were only two applicants before me. This was by far the longest time to submit a Schengen visa application for me.

Fast-forward to the 30th of July, and I received an email from the Embassy of Hungary asking me to submit an additional document - paid air ticket - for my application. I had only submitted dummy flight tickets, and they had been enough for the Schengen visas I had applied for until then. This was the first time a country was asking me to submit a confirmed flight ticket during the visa process.

I consulted my travel agent on this, and they were fairly confident that I would get the visa, since the embassy was asking me to submit confirmed flight tickets. So I asked the travel agent to book the flight tickets. These tickets were ₹78,000, and the airline was Emirates. Then, I sent the flight tickets to the embassy by email.

The embassy sent the visa results on the 6th of August, which I received the next day.

My visa had been approved! It took 14 days for me to get the Hungary visa after submitting the application.

See you in the next one!

Thanks to Badri for proofreading.

Maybe I’m silly. Maybe I just can’t read what they write to me (and to other Collaborans).

I read this:

The Document Foundation and the LibreOffice project are open by definition and principle to all developers. Our doors have never been closed to any of you, and they never will be.

… and I somehow feel that this means: “we at TDF have kicked you off of membership, but you are welcome to keep contributing, and to have a warm feeling about it after that”.

Open doors? I can’t even apply for membership for more than three years from now. They have officially informed me about that – this is a link to the EML with the notice from MC; it includes my reply to their original “notification”. They write:

the Membership Committee expels you from the board of trustees with immediate effect. Because you didn’t relinquished your membership immediately, we decided also considering all circumstances to block membership for at least three calendar years, thus at least up to December, 31 2029.

If I had relinquished my membership as the MC asked, I would have lost my right to challenge this “temporary inconvenience” – and I am puzzled by the claim by a board member that “in the meantime … [I] can reapply for membership as soon as the legal matters have been settled.” (https://community.documentfoundation.org/t/comment-about-collabora-blog-post-tdf-community-blog/13626/9). I can re-apply, but – it is clear I will not be accepted until 2030 (the earliest possibility). After that the “bylaws” they invented this January will prevent me from e.g. nominating to BoD for two more years. Definitely honest and welcoming. (No idea how the remaining TDF members feel about the amazing fact that the board could decide and implement a restriction like that, limiting you without asking your opinion.)

Well, enough of that. No more posts about TDF. It was nice, and I met many people during that period, that I hope I can continue to call friends; but the current policy of that thing claiming nice goals and high standards is so disgusting, that I am even glad to not have relation to that anymore. Let’s do some hacking instead!

After nearly 10 years, it’s time to start contributing to Open Source again.

My Open Source journey began with Breeze icons for KDE; then I added Breeze icons to LibreOffice. After that I made the completely new Colibre icon theme for LibreOffice, which is the default for Windows users.

After the icon work I started on presets, different visuals and user-interface-related things like the Notebookbar, which brought me to Collabora Online Office, where I quickly switched to the mobile toolbar and dark mode.

After my first Open Source journey I took a long break, which showed me that Open Source is great: other community members updated and improved my work. I can say it’s awesome to see the work done within the DNA of each OSS.

Now I will start again where I did my last work: Collabora Online (Desktop/Mobile/Tablet …). Why? Because I can! That’s the great benefit of OSS. Everyone can improve it, and I enjoy the Collabora Community a lot. In addition to its fast development, it’s that easy to make changes and contribute.

Happy Hacking on any OSS you enjoy. It would be awesome to meet you at the Collabora Community.

“Ideally, we would have preferred to avoid this post.”

When I read those opening words in Italo’s recent statement, “Let’s put an end to the speculation,” they stung. I don’t know if that specific post should have existed or not, but those first few words are a perfect reflection of the current TDF attitude. It is an attitude directed toward the very people who devoted large parts of their lives, their passion, and their hearts to the Foundation’s ideals.

What I am missing is not that specific post that Italo wrote. What I expected—what I felt I earned—was a post that looked me in the eye. I wanted an explanation as to why I am being cast out from the Trustees after everything I’ve honestly given. I wanted to know my specific “guilt,” or why the Foundation now finds “guilt by association” to be an acceptable standard.

And then—I would hope—they would publicly say: “Mike, we appreciate everything you’ve done. We deeply regret the unfortunate decisions we—not you—made over the years. But we feel this is the only path forward, and we are sorry.”

But that is the post they successfully avoided writing.

by Popa Adrian Marius (noreply@blogger.com) at April 02, 2026 08:52 AM

I came here due to a (decades-spanning, arguably perverse) love affair with the LibreOffice code body. Less so for a love of organizational bodies.

So I mostly remained passive and watched the coup d’état unfold at the Document Foundation. Where some folks apparently felt the need to have us all thrown out. Oh my.

Should I have been more involved around the apparent issues at TDF? Maybe. But then again, I’m a naive little nerd who loves fixing dysfunctional code way more than navigating dysfunctional political setups. (And to be fair, I tried to do my duty, and did serve a term on the membership committee. Back when that was likely more pleasant than what it would be today.)

Luckily, the code and the fun will most certainly live on, one way or another. Not least at https://collaboraonline.github.io/.

Happy hacking, once more,

sberg

Not too long ago, a change landed that brought Biff12 clipboard format support to Calc v.26.2 – thanks Laurent!

It was an easyhack that I authored some time ago, and Laurent volunteered to implement that long-standing missing feature. The small catch was that the feature was Windows-specific (it is trivial to get the wanted clipboard content there, simply by copying from Excel), while Laurent developed on another platform.

Laurent had done the majority of the work before he got stuck, unable to test or debug further changes. He then asked me if there was a way to continue on the platform he used.

At that time, I answered that no, one would need Windows (and Excel) to continue the implementation. So I jumped in and added the rest, and in the end we created the change in co-authorship.

But later, when part of my code turned out to be problematic, and I needed to fix it and create a unit test for it, I discovered a trick that can put Biff12 data into the system clipboard on any platform, without Excel, so that one can then just paste and debug everything that’s going on there. It relies on the UNO API, and can be implemented e.g. in Basic:

function XTransferable_getTransferData(aFlavor as com.sun.star.datatransfer.DataFlavor) as variant

if (not XTransferable_isDataFlavorSupported(aFlavor)) then exit function

oUcb = CreateUnoService("com.sun.star.ucb.SimpleFileAccess")

oFile = oUcb.openFileRead(ConvertToURL("/path/to/biff12.clipboard.xlsb"))

dim sequence() as byte

oFile.readBytes(sequence, oFile.available()) ' changes value type of 'sequence' to integer

XTransferable_getTransferData = CreateUnoValue("[]byte", sequence)

end function

function XTransferable_getTransferDataFlavors() as variant

aFlavor = new com.sun.star.datatransfer.DataFlavor

aFlavor.MimeType = "application/x-openoffice-biff-12;windows_formatname=""Biff12"""

XTransferable_getTransferDataFlavors = array(aFlavor)

end function

function XTransferable_isDataFlavorSupported(aFlavor as com.sun.star.datatransfer.DataFlavor) as boolean

XTransferable_isDataFlavorSupported = (aFlavor.MimeType = "application/x-openoffice-biff-12;windows_formatname=""Biff12""")

end function

sub setClipboardContent

oClip = CreateUNOService("com.sun.star.datatransfer.clipboard.SystemClipboard")

oClip.setContents(CreateUNOListener("XTransferable_", "com.sun.star.datatransfer.XTransferable"), nothing)

end sub

The first three functions are a Basic implementation of the XTransferable interface.

Running setClipboardContent prepares the system clipboard on any platform, using the trick of implementing an arbitrary UNO interface with CreateUNOListener. After that, pasting into Calc shows whether things work (whether the content of /path/to/biff12.clipboard.xlsb is pasted as expected), and lets you make improvements. Had I known this trick back then, I would of course have shared it with Laurent; but I thought I’d put it here now, so maybe it helps me or someone else in the future. (Note that application/x-openoffice-biff-12;windows_formatname="Biff12" in the code is the name introduced by Laurent in the discussed commit; that name, and the actual data in the file, depend on the exact format that you work with.)

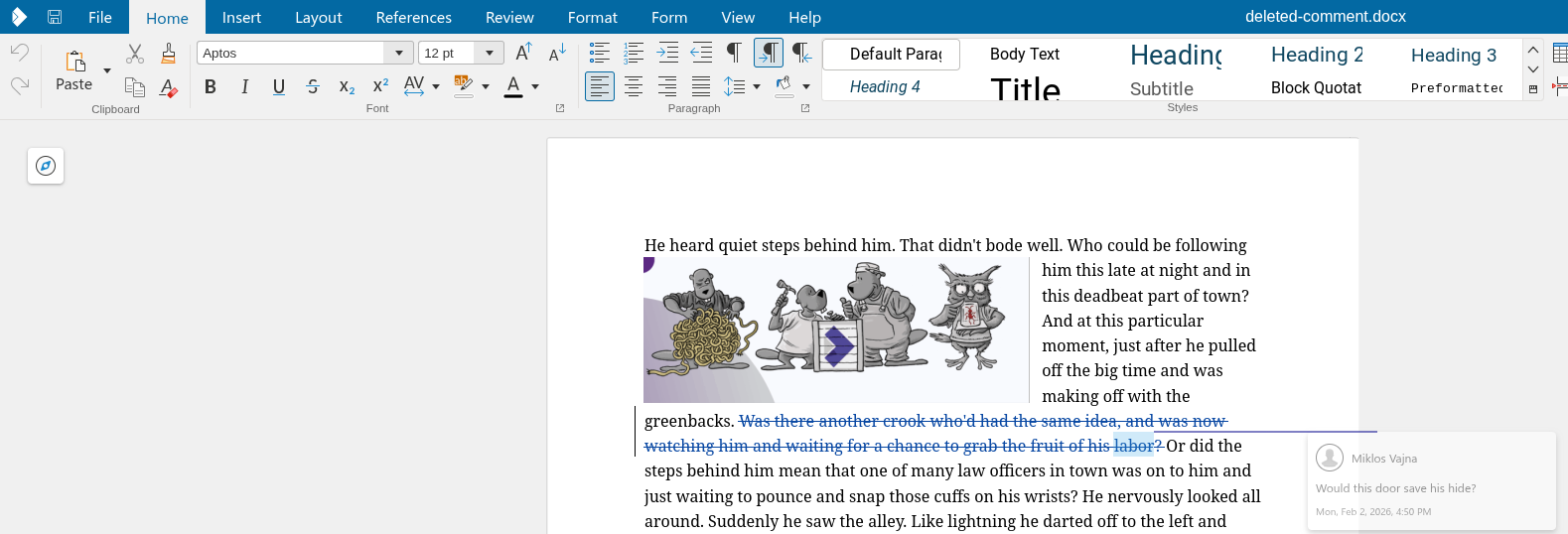

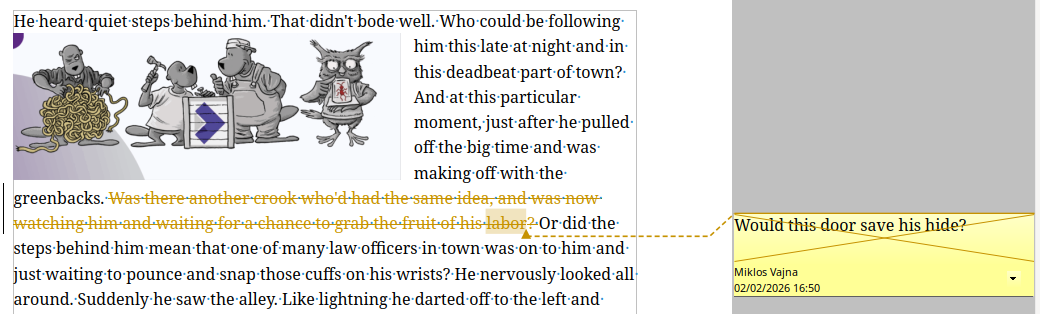

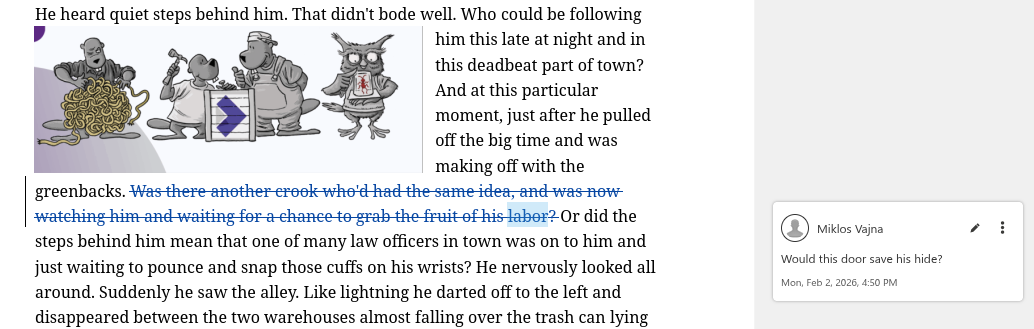

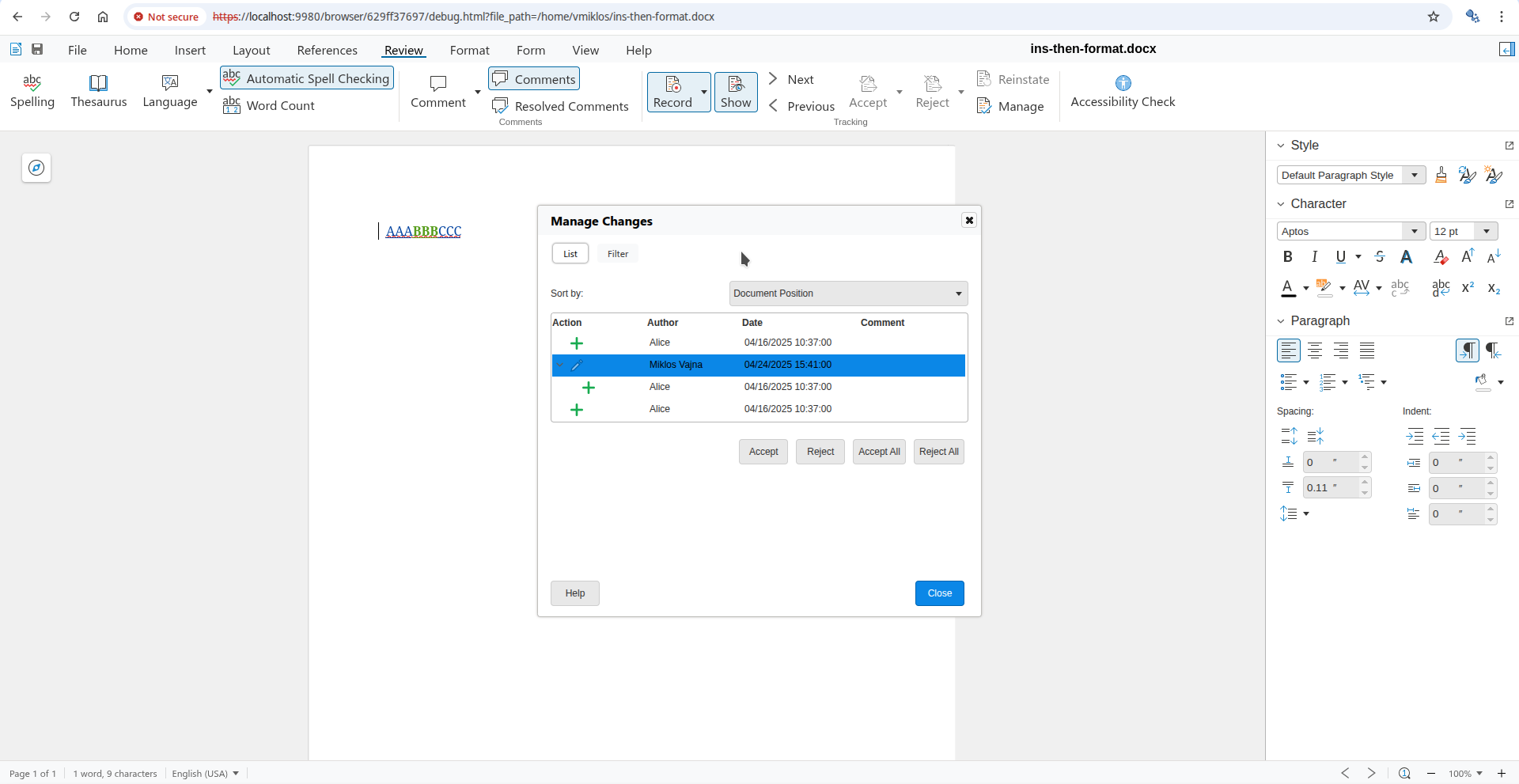

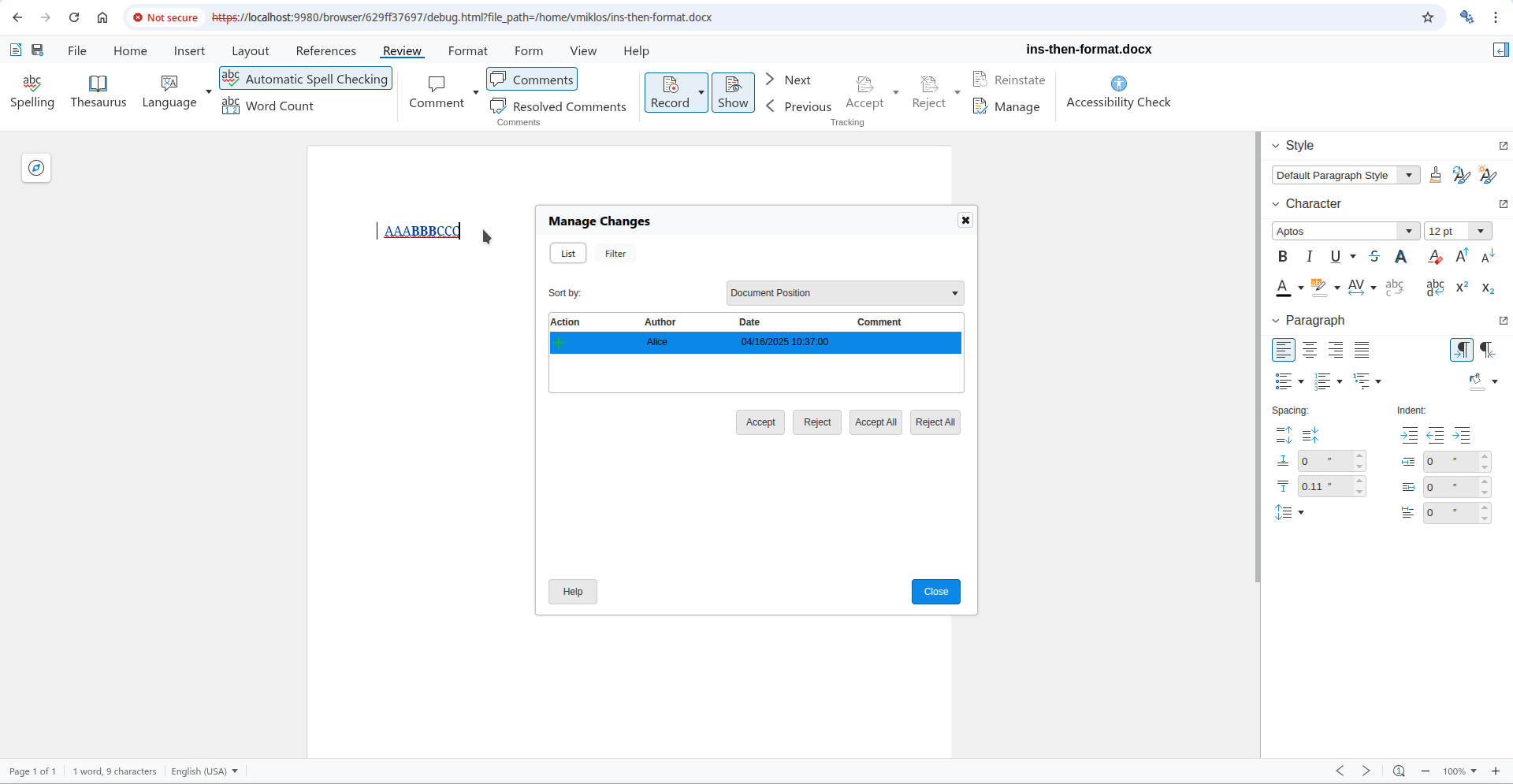

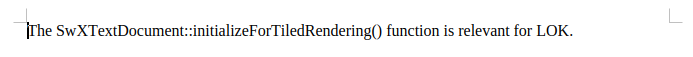

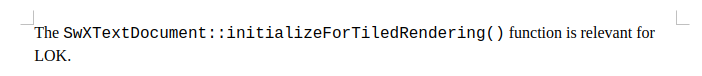

If you have a commented text range that gets deleted while track changes is on, and you later save and load the document with Writer's DOCX filter, this now works correctly.

This work is primarily for Collabora Online, but the feature is available in desktop Writer as well.

It was already possible to comment on text ranges. Comments were also supported inside deletes when track changes is enabled. Each of these could already be exported to and imported from DOCX in Writer. But you could not combine the two.

With the increasing popularity of commenting text ranges (rather than just inserting a comment with an anchor), not being able to combine these was annoying.

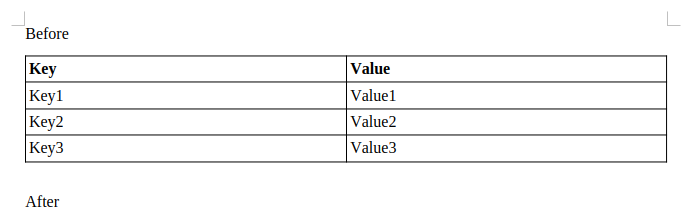

Here is what a commented text range inside a delete from DOCX now looks like; note the semi-transparent comment hinting that it's deleted:

As a side effect, this also fixes the behavior in desktop Writer, which crosses out deleted comments:

In the past, the "is this deleted" property was not visible in the render result:

And it was also bad in desktop Writer:

This required changes to both DOCX import and export: a comment could be deleted or could have an anchor which is a text range, but you couldn't have both.

If you would like to know a bit more about how this works, continue reading... :-)

As usual, the high-level problem was addressed by a series of small changes. Core side:

You can get a development edition of Collabora Online 25.04 and try it out yourself right now: try the development edition. Collabora intends to continue supporting and contributing to LibreOffice; the code is merged, so we expect all of this work will be available in TDF's next release too (26.8).

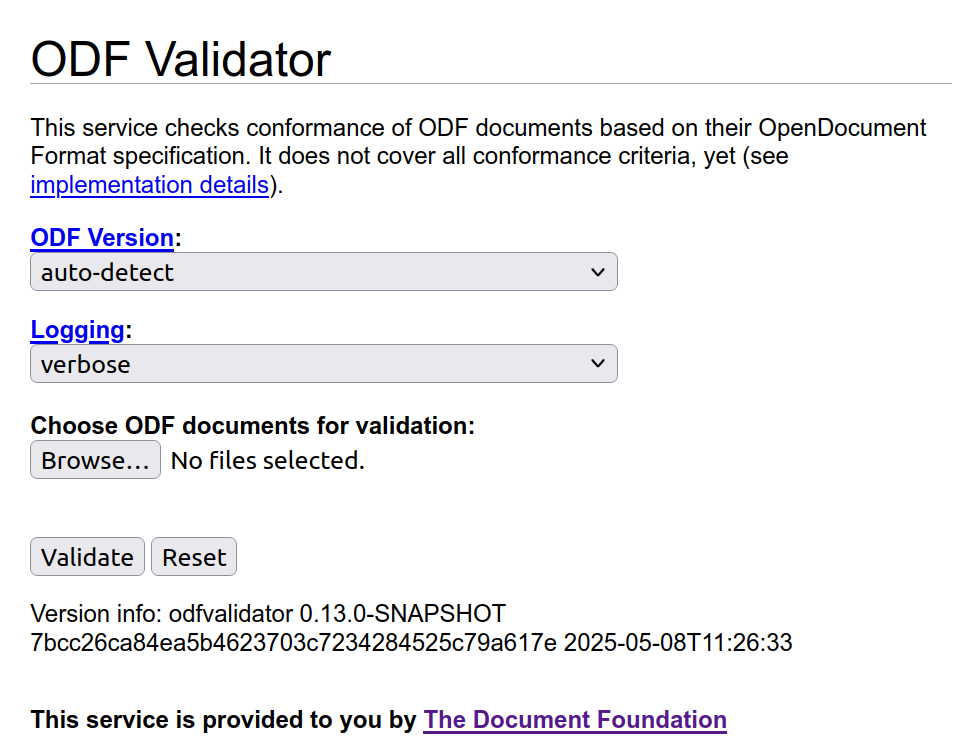

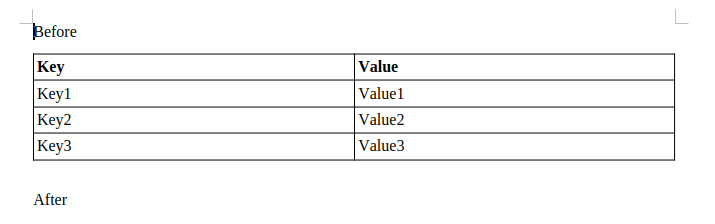

In LibreOffice development, there are many cases where you want to validate some documents against standards: either Open Document Format (ODF) or MS Office Open XML (OOXML). Here I discuss how to do that.

Update: Article updated to reflect that odfvalidator 0.13.0 has just been released.

ODF is the native document file format that LibreOffice and many other open source applications use. It is basically a set of XML files that are zipped together, and can describe various aspects of the document, from the content itself to the way it should be displayed. These XML files have to conform to the ODF standard, which is defined by XML schemas. The latest version of ODF is 1.4, which is yet to be implemented in LibreOffice.
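Because an ODF file is an ordinary ZIP package, its parts can be listed with any ZIP library. The following is only a minimal Python sketch: it builds a tiny stand-in package in memory (just a `mimetype` entry and a placeholder `content.xml`, which is not a valid document) and reports what is inside. The helper names `make_sample_package` and `describe_package` are made up for this illustration.

```python
import io
import zipfile

ODT_MIMETYPE = "application/vnd.oasis.opendocument.text"


def make_sample_package() -> bytes:
    """Build a minimal stand-in for an .odt package (NOT a valid document)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as package:
        # ODF packages keep their media type in an uncompressed 'mimetype' entry,
        # written first. A bare ZipInfo defaults to stored (no compression).
        package.writestr(zipfile.ZipInfo("mimetype"), ODT_MIMETYPE)
        # Placeholder XML part; a real package also has styles.xml, meta.xml, etc.
        package.writestr("content.xml", "<placeholder/>",
                         compress_type=zipfile.ZIP_DEFLATED)
    return buffer.getvalue()


def describe_package(data: bytes) -> dict:
    """Return the declared media type and the XML parts found in the package."""
    with zipfile.ZipFile(io.BytesIO(data)) as package:
        names = package.namelist()
        mimetype = None
        if "mimetype" in names:
            mimetype = package.read("mimetype").decode("ascii")
    return {
        "mimetype": mimetype,
        "xml_parts": [name for name in names if name.endswith(".xml")],
    }


if __name__ == "__main__":
    print(describe_package(make_sample_package()))
```

To look at a real file, read its bytes (for example with `pathlib.Path("test.odt").read_bytes()`) and pass them to `describe_package`.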

You can find more about ODF in these links:

There are various tools to do the validation, but the preferred one is the ODF Toolkit Validator:

Compiled binaries of ODF Toolkit can be downloaded from the above GitHub project:

Then, you can use the ODF validator this way:

$ java -jar odfvalidator-0.13.0-jar-with-dependencies.jar test.odt
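When several documents need checking, the same command line can be assembled from a script. The sketch below is only an illustration in Python: the helper `validator_command` is hypothetical, the file name `other.odt` is made up, and placing the `-e` option (the extended conformance check from the validator's help text) before the document name is an assumption.

```python
import shlex
from typing import List


def validator_command(jar: str, document: str, extended: bool = False) -> List[str]:
    """Return the argument list for one validator run on one document."""
    command = ["java", "-jar", jar]
    if extended:
        # Assumption: options such as -e are placed before the document name.
        command.append("-e")
    command.append(document)
    return command


if __name__ == "__main__":
    jar = "odfvalidator-0.13.0-jar-with-dependencies.jar"
    # 'other.odt' is a made-up file name, used only to show the loop.
    for document in ["test.odt", "other.odt"]:
        print(shlex.join(validator_command(jar, document)))
```

The returned list can be handed to `subprocess.run` as-is, which avoids quoting problems with file names that contain spaces.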

You may also use the online validator, odfvalidator.org, to do a validation.

Please read this disclaimer before using:

This service does not cover all conformance criteria of the OpenDocument Format specification. It is not applicable for formal validation proof. Problems reported by this service only indicate that a document may not conform to the specification. It must not be concluded from errors that are reported that the document does not conform to the specification without further investigation of the error report, and it must not be concluded from the absence of error reports that the OpenDocument Format document conforms to the OpenDocument Format specification.

MS Office Open XML (OOXML) is the native format of Microsoft Office documents. It is also a set of XML files zipped together, which conform to some XML schemas.
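Since both formats are ZIP packages, a script can tell them apart by looking at the entry names before deciding which validator to run. The Python sketch below relies on two well-known markers: OOXML packages carry a `[Content_Types].xml` entry, while ODF packages carry `mimetype` and `META-INF/manifest.xml`. The helper names and the tiny in-memory packages are made up for this illustration and are not valid documents.

```python
import io
import zipfile


def build_package(entries: dict) -> bytes:
    """Build an in-memory ZIP from {entry name: text}; for demonstration only."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as package:
        for name, content in entries.items():
            package.writestr(name, content)
    return buffer.getvalue()


def guess_package_kind(data: bytes) -> str:
    """Guess 'ooxml', 'odf' or 'unknown' from the entry names of a ZIP package."""
    with zipfile.ZipFile(io.BytesIO(data)) as package:
        names = set(package.namelist())
    if "[Content_Types].xml" in names:
        return "ooxml"
    if "mimetype" in names or "META-INF/manifest.xml" in names:
        return "odf"
    return "unknown"


if __name__ == "__main__":
    docx_like = build_package({"[Content_Types].xml": "<Types/>"})
    odt_like = build_package({"mimetype": "application/vnd.oasis.opendocument.text"})
    print(guess_package_kind(docx_like), guess_package_kind(odt_like))
```

This is a heuristic on entry names only; it says nothing about whether the document is valid, which is what the validators are for.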

You can find out more about OOXML here:

There are tools to do the validation, and the one used in LibreOffice is Office-o-tron. You can use it with the command below to validate an example file, test.docx:

$ java -jar officeotron-0.8.8.jar ~/test.docx

Office-o-tron can be downloaded from dev-www.libreoffice.org server of LibreOffice, and this is currently the latest version:

It is worth noting that Office-o-tron can also be used to validate ODT files.

To go beyond the current ODF standard, new features are sometimes introduced as “ODF extensions”, and are then gradually added to the standard. You can read more in the TDF Wiki:

In these cases, you may see validation errors for such extensions. For example:

test.odt/styles.xml[2,3347]: unexpected attribute “loext:tab-stop-distance”

test.odt/styles.xml[2,4849]: unexpected attribute “loext:opacity”

You may avoid such errors by using the -e option, which ignores such unknown markup:

-e: Check extended conformance (ODF 1.2 and 1.3 documents only)
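If you keep the strict report, for example to see which extensions a document uses, the messages can be sorted by script. The Python sketch below parses lines shaped like the two examples above; the pattern is inferred from those two lines only, and reading the bracketed numbers as line and column is an assumption. The names `Finding`, `parse_report` and `is_extension_finding` are made up for this illustration.

```python
import re
from typing import Iterable, List, NamedTuple

# The two report lines quoted in this post.
SAMPLE_REPORT = [
    "test.odt/styles.xml[2,3347]: unexpected attribute “loext:tab-stop-distance”",
    "test.odt/styles.xml[2,4849]: unexpected attribute “loext:opacity”",
]

# Assumed shape: <part>[<line>,<column>]: <message>
ERROR_LINE = re.compile(
    r"^(?P<part>.+?)\[(?P<line>\d+),(?P<column>\d+)\]:\s*(?P<message>.+)$"
)


class Finding(NamedTuple):
    part: str
    line: int
    column: int
    message: str


def parse_report(lines: Iterable[str]) -> List[Finding]:
    """Turn report lines into Finding records, skipping lines that do not match."""
    findings = []
    for raw in lines:
        match = ERROR_LINE.match(raw.strip())
        if match:
            findings.append(Finding(match["part"], int(match["line"]),
                                    int(match["column"]), match["message"]))
    return findings


def is_extension_finding(finding: Finding, prefix: str = "loext:") -> bool:
    """True if the message mentions a name with the given extension prefix."""
    return prefix in finding.message


if __name__ == "__main__":
    for finding in parse_report(SAMPLE_REPORT):
        kind = "extension" if is_extension_finding(finding) else "other"
        print(kind, finding.part, finding.message)
```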

If you want to use the latest features from ODF validator, you should build ODF Toolkit from source. You can then run it with this command:

$ java -jar ./validator/target/odfvalidator-0.14.0-SNAPSHOT-jar-with-dependencies.jar test.odt

ODF Toolkit developers have recently (23 January 2026) published the new release 0.13. If you do not build from sources, you can use this new version which contains ODF 1.4 support.

When you want to make sure that the ODT or OOXML document you generate is valid according to the standards, then you need validation. Sometimes, it is the opposite: you want to make sure that the input document is valid before processing it, or when you want to know if the problem is from LibreOffice (or other processors), or the document itself. Then, again, the validator is the right tool to use.

Happy new year 2026! I hope that this year will be great for you, and the global LibreOffice community, and the software itself! I hereby discuss the past year 2025, and the outlook for 2026 in the development blog.

At The Document Foundation (TDF), our aim is to improve LibreOffice, the leading free/open source office suite that has millions of users around the world. Our work is community-driven, and the software needs your contribution to become better, and work in a way that you like.

My goal here is to help people understand LibreOffice code more easily via EasyHacks and tutorials, and eventually participate in LibreOffice core development to make LibreOffice better for everyone. In 2025, I wrote 14 posts around LibreOffice development in the dev blog (4 of them are unpublished drafts).

The focus of the development blog for 2026 will be:

You can provide feedback simply by leaving a comment here, or sending me an email to hossein AT libreoffice DOT org.

We provide mentoring support to individuals who want to start LibreOffice development. You are welcome to contact me via the above email address if you need help to build LibreOffice and do some EasyHacks. You may also refer to our Getting Involved Wiki page:

Let’s hope for a better year for LibreOffice (and the world) in 2026.

The bullet support in Impress got a couple of improvements recently: some of this is PPTX support, and the rest are general UI improvements.

This work is primarily for Collabora Online, but the feature is available in desktop Impress as well.

Probably the simplest presentations are just a couple of slides, each slide having a title shape and an outliner shape, containing some bullets, perhaps with some additional images. Images are just bitmaps, so let's focus on outliner shapes and their outliner / bullet styles.

What happens if you save these to PPTX and load it back? Can you toggle between a numbering and a bullet? Can you return to an outliner style after you had direct formatting for your bullet?

The first case was about bullet editing of this document:

If you pressed enter at the end of 'First level', then pressed <tab> to promote the current paragraph to the second level, nothing happened. The reason for this was that our PPTX export was missing the list styles of shapes, except for the very first list style. And the same was missing on the import side, too. With this, not only is the rendering of the bullets OK, but adding new paragraphs and using promoting / demoting to change levels also work as expected.

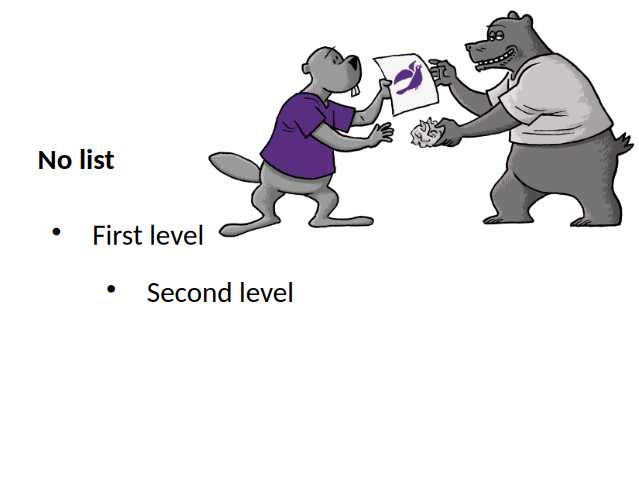

The second case was about this document, where the second level had a numbering, not a bullet:

We only had UI to first toggle off a numbering to no numbering, then you could toggle on bullets. Now it's possible to do this change in one step.

The last case was about styles. Imagine that you had a master page with an outline shape and some reasonable-looking configuration for the first and second levels as outline styles:

Notice how the last paragraph has a slightly inconsistent formatting, due to direct formatting. Let's fix this.

Go to the end of the last bullet, which is currently not connected to an outline style, toggle bullets off and then toggle it on again. Now we clear direct formatting when we turn off the bullet, so next time you turn bullets on, it'll be again connected to the outline style's bullet configuration and the content will look better.

Note how this even improves consistency: Writer was already behaving the same way, where toggling bullets off and then on again got rid of previously applied unwanted direct formatting.

If you would like to know a bit more about how this works, continue reading... :-)

As usual, the high-level problem was addressed by a series of small changes. Core side:

FN_TRANSFORM_DOCUMENT_STRUCTURE handling to a new function

You can get a development edition of Collabora Online 25.04 and try it out yourself right now: try the development edition. Collabora intends to continue supporting and contributing to LibreOffice, the code is merged so we expect the core of this work will be available in TDF's next release too (26.2).

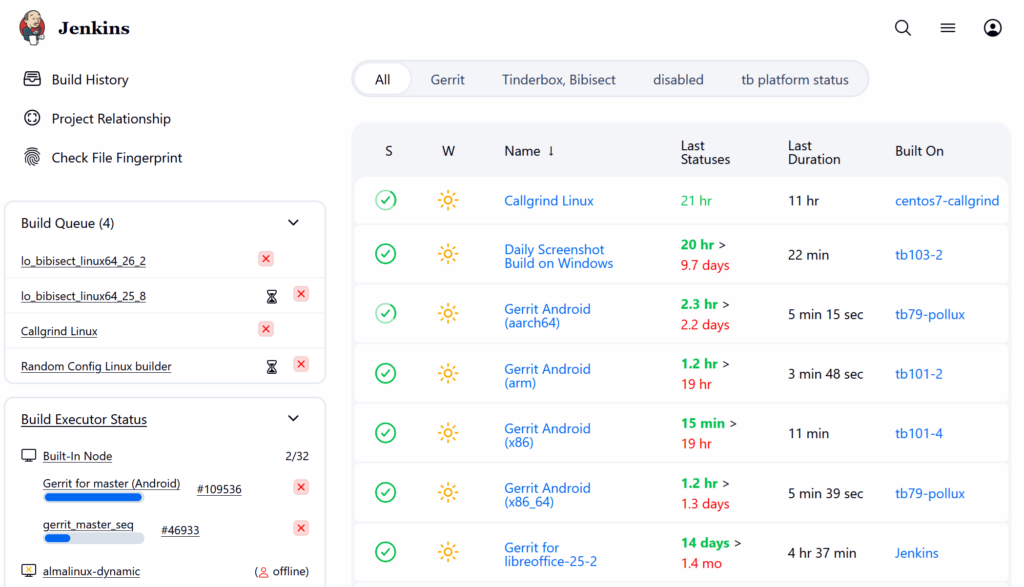

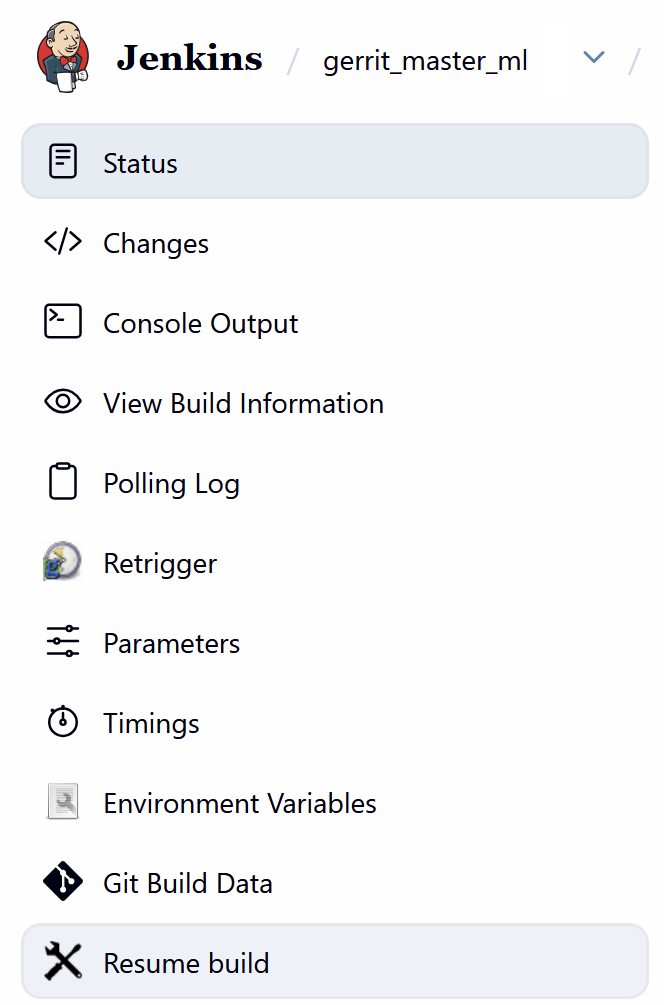

After submitting a patch to LibreOffice Gerrit, one has to wait for the continuous integration (CI) to build and test the changed source code to make sure that the build is OK and the tests pass successfully. Here we discuss the situation when one or more CI builds fail, and how to handle that.

After you submit code to LibreOffice Gerrit, reviewers have to make sure that it builds, and the tests pass with the new source code. But, it is not possible for the reviewers to test the code on each and every platform that LibreOffice supports. Therefore, Jenkins CI does that job of building and testing LibreOffice on various platforms.

This can take a while, usually about 1 hour, but it can sometimes take longer. If everything is OK, then your submission will get Verified +1.

Currently, these are the platforms used in CI:

- gerrit_linux_gcc_release
- gerrit_linux_clang_dbgutil
- gerrit_android_x86_64 and gerrit_android_arm
- gerrit_windows_wsl
- gerrit_mac

Some of the tests are more extensive, for example Linux / Clang also performs additional code quality checks with clang compiler plugins. Also, UITests are not run on each and every platform.

There can be multiple reasons why a CI build fails and gives your submission Verified -1. These are some of the reasons; depending on the reason, the solution can be different.

1. Your code’s syntax is wrong and compilation fails

In this case, you should fix your code, and then submit a new patch set. You have to wait again for a new CI build.

2. The code’s syntax is OK, but it is not properly formatted

You should refer to the TDF Wiki article below and use the clang-format tool to format your code properly.